Self-Correcting AI Platform

Verified Legal Content at Scale

Summary

A verification architecture for AI-generated legal content, deployed across six state-level firearms law reference sites. 584 articles covering statutes, case law, and regulatory frameworks, produced and maintained by 25+ specialized AI agents across seven pipelines.

The core design problem: having one AI check another automates agreement, not verification. The solution uses context isolation, primary source enforcement, and multi-dimensional parallel auditing so that no single AI invocation is trusted as the final word.

The first two deep audit cycles extracted 5,617+ verifiable claims, verified 2,064+ against primary legal sources, and reduced critical errors by 61%, with the audit running on a recurring basis since. Sole architect, designer, and developer.

The platform architecture is domain-agnostic and applies to any field where content must be accurate, cite primary sources, and adapt as underlying rules change.

Overview

- 584 articles across 6 state websites, served from a single Laravel monorepo

- 7 specialized skills with 25+ AI agents across build, update, verification, integrity-check, legislative scanning, and indexing pipelines

- Triple audit verification where auditing agents operate in isolated contexts and independently verify claims against primary legal sources

- A local mirror of 871 state statute sections gives the verifier deterministic primary-source grounding. The first full run grounded by it checked every claim across all 584 articles at 100% coverage and returned zero fabricated statute citations across all six states

- Self-improving system that encodes each cycle's errors and corrections back into the pipeline. The audit now runs as a repeating process; the first two cycles cut CRITICAL errors by 61%

- Optimized maintenance path reduced token usage by roughly 80% per state while preserving all verification checks

- Production time: approximately 4 hours per state, compared to weeks of manual work per state

- Config-driven architecture requiring zero code changes to add a new state

- Proactive legislative monitoring via LegiScan API, scanning active sessions for uncovered bills

- Post-deployment integrity verification catching silent cross-state data contamination

Role

- Sole architect, designer, and developer

- Designed the multi-agent pipeline architecture and 25+ agent prompts across 7 skills

- Built the Laravel 12 + React 19 monorepo with config-driven multi-tenancy

- Deployed and operate 6 production sites on a single VPS

- Designed the verification architecture (context isolation, primary source enforcement, triple audit)

- Built the maintenance pipeline that produces database migrations for live content updates

- Built the verification system that runs repeated full audit cycles. The first two extracted 5,617+ claims, verified 2,064+ against primary sources, and cut CRITICAL errors by 61%

- Built a local statute mirror (871 sections across six states) so the verifier resolves citations against primary text deterministically instead of live web fetches; the first full run grounded by it produced zero fabricated citations across all six states

- Built the post-deployment integrity audit system after discovering a silent contamination incident

- Designed the cost optimization layer that reduced routine maintenance by 80%

00. Table of Contents

01. Context: The accuracy problem with AI-generated content in high-stakes domains.

02. The Domain: Why firearms law across six states is the hardest possible test case.

03. The Architecture: One codebase, six deployments, zero code changes per state.

04. The Build Pipeline: 8 agents, 8 stages, from legal research to deployable database seeders.

05. The Verification Architecture: Why having one AI check another's work does not actually work, and what does.

06. The Maintenance Pipeline: 10 agents that audit live content and produce database migrations.

07. The Deep Audit: A repeating process. The first cycle found and fixed 18 CRITICAL errors; the second cut CRITICAL errors by 61%; each pass since finds fewer.

08. The Contamination Incident: A silent failure that revealed a gap in the deployment model.

09. The Evolution: From 10 minutes per state to 8 hours per state to 4 hours per state.

10. Cost Optimization: Reducing routine maintenance by roughly 80% per state without reducing quality.

11. Outcome: Production numbers, what the system catches, and where it goes from here.

01. Context

When AI generates content at scale, the default pipeline is straightforward: prompt the model, generate the output, publish it. For blog posts or marketing copy, this works well enough. Errors are low consequence. A wrong adjective does not send someone to prison.

Legal content is different. A wrong penalty range, a misquoted statute section number, or an oversimplified legal standard has real consequences for real people making real decisions. Someone reading a firearms law reference site to understand what they can legally do is relying on that content to be accurate. If the site says the penalty for unlicensed possession is a fine when the statute says it is a felony with mandatory imprisonment, the failure is not editorial. It is structural.

The question I started with was not "can AI write legal content" but "can AI produce legal content that is verifiable, maintainable, and correct at a level where I would trust it enough to put my name on it."

The answer turned out to be: not with the standard pipeline. The standard pipeline produces content that looks correct. Looking correct and being correct are not the same thing.

02. The Domain

Firearms law was not chosen at random. I chose it because it is one of the hardest domains to get right, which makes it the best possible stress test for a verification architecture.

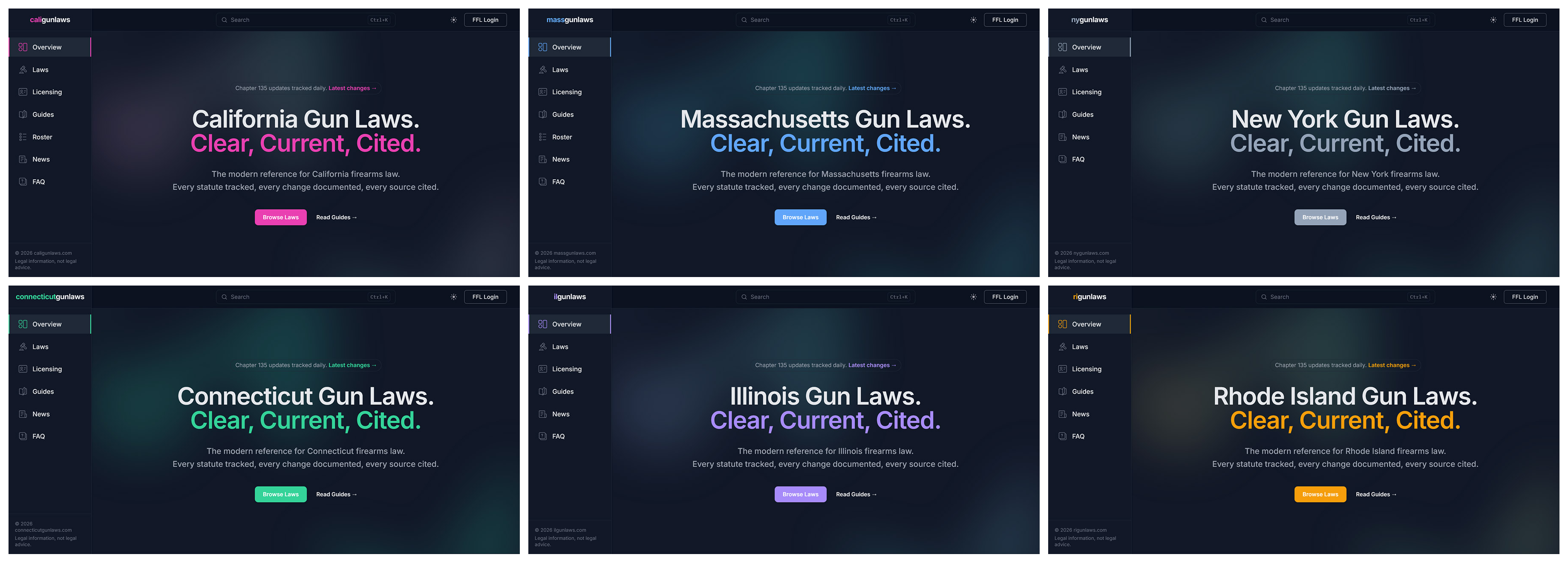

The six states in the platform are Massachusetts, Rhode Island, California, Connecticut, New York, and Illinois. These are the most legally complex and restrictive firearms jurisdictions in the country. Each one structures its laws differently:

Massachusetts

Chapters 140 and 269 of the Massachusetts General Laws (MGL). Comprehensive reform via Chapter 135 of the Acts of 2024 rewrote significant portions of the regulatory framework.

California

Provisions distributed across the Penal Code. Certified handgun roster maintained by DOJ. More firearms legislation per session than most states produce in a decade.

Illinois

FOID card system unique to Illinois. Concealed carry framework with its own training and qualification requirements.

New York

Reshaped by NYSRPA v. Bruen, which invalidated the "proper cause" requirement and triggered a wave of responsive legislation.

Connecticut

Own statutory structure, licensing system, and regulatory agencies distinct from neighboring states.

Rhode Island

Own statutory structure, licensing system, and regulatory agencies distinct from neighboring states.

The point is that these six states do not share a common legal architecture. A system that can accurately research, write, verify, and maintain content across all six, where each state's laws are structured differently, use different statutory schemes, and reference different regulatory bodies, is a system that works. If the architecture holds here, it holds in any regulated domain.

03. The Architecture

The platform is a Laravel 12 monorepo with a React 19 frontend, served via Inertia.js. All six state websites run from the same codebase. State-specific differences are handled entirely through configuration.

Each deployment has its own .env file that sets the state code, state name, domain, brand colors, email addresses, and database connection. A central config/state.php file reads these values and makes them available throughout the application via config('state.*'). The frontend receives state configuration through Inertia's shared props, accessible in any React component via a useStateConfig() hook.

Adding a new state requires no code changes. The steps are: create a .env file with the state's values, create a MariaDB database, generate the content (via the build pipeline), run migrations and seeders, set up Nginx and SSL, and deploy. The application code is identical across all six sites.
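The config-driven idea can be illustrated outside Laravel. The following is a minimal Python sketch, not the platform's PHP code: the environment key names (STATE_CODE, STATE_NAME, APP_DOMAIN, DB_DATABASE) and the database names are hypothetical stand-ins for whatever the real .env files use. It shows the property that matters, which is that two deployments run the same function and differ only in their environment values.

```python
from dataclasses import dataclass

# Hypothetical key names; the real .env keys are not given in this write-up.
REQUIRED_KEYS = ("STATE_CODE", "STATE_NAME", "APP_DOMAIN", "DB_DATABASE")


@dataclass(frozen=True)
class StateConfig:
    code: str
    name: str
    domain: str
    database: str


def load_state_config(env: dict[str, str]) -> StateConfig:
    """Build one deployment's configuration from its environment values."""
    missing = [key for key in REQUIRED_KEYS if not env.get(key)]
    if missing:
        raise ValueError(f"missing state settings: {', '.join(missing)}")
    return StateConfig(
        code=env["STATE_CODE"].upper(),
        name=env["STATE_NAME"],
        domain=env["APP_DOMAIN"],
        database=env["DB_DATABASE"],
    )


# Same code, two deployments: only the environment differs.
ma = load_state_config({"STATE_CODE": "ma", "STATE_NAME": "Massachusetts",
                        "APP_DOMAIN": "massgunlaws.com", "DB_DATABASE": "ma_db"})
il = load_state_config({"STATE_CODE": "il", "STATE_NAME": "Illinois",
                        "APP_DOMAIN": "ilgunlaws.com", "DB_DATABASE": "il_db"})
```

Failing fast on a missing key is a design choice of this sketch: a half-configured deployment is refused at startup rather than served with another state's defaults.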

On the VPS, each site has its own directory, its own database, its own Nginx configuration, its own SSL certificate, its own Supervisor queue worker, and its own cron entry. They all pull from the same Git repository. A code change pushed to GitHub is deployed to all six sites by pulling the latest commit in each directory and running the standard Laravel deployment commands.

The decision to keep six separate deployments rather than building a multi-tenant admin panel was deliberate. Multi-tenancy would have required adding a state_id column to every database table, scoping every query, and building a state-switching UI. The complexity was not justified. Separate deployments with a shared codebase give the same code reuse benefit without the query scoping overhead, and each site's admin panel operates independently, meaning a problem on one site does not affect the others.

04. The Build Pipeline

The build pipeline (/build-state) takes a two-letter state code and produces a complete set of firearms law articles: researched, written, audited, and assembled into Laravel database seeders ready for deployment.

It uses 8 specialized AI agents across 8 stages. Stage 3c, the claim verifier, was added after the first deep audit discovered fabricated content (a nonexistent executive order in one state, a nonexistent bill number in another). The claim verifier now extracts every statute citation, fee amount, penalty, case name, and regulation from all generated content and verifies each against primary legal sources before the audit stage. Fabricated or incorrect claims are blocking. They must be corrected before the pipeline can proceed.

Initialization

Validates state code, derives all file paths, creates working directories, checks for existing progress. Enables --resume flag to continue from last completed stage.

Research

Identifies primary statutes, searches for recent legislation and court decisions, finds primary source URLs on official government websites, maps the legal landscape across 11 topic areas.

Planning · Human checkpoint

Produces categories, tags, 35 to 80 planned articles, FAQ entries, and glossary terms. Reviewed before any content is written. Items can be added, removed, or modified.

Writing

Processes one seeder group at a time. Every article written in structured content doc format with full HTML body, inline citations, and complete metadata. Content doc is human-reviewable, machine-parseable, diffable in Git, and persistent on disk across long sessions.

FAQ + Glossary

Writes FAQ entries and glossary terms as PHP-ready arrays, matching the existing FaqController and GlossaryController formats.

Claim Verification

Extracts every statute citation, fee amount, penalty, case name, and regulation from all content docs. Verifies each against primary legal sources. Claims rated VERIFIED, FABRICATED, WRONG, or UNVERIFIABLE. Fabricated or wrong claims block progression to Stage 4.

Triple Audit, Parallel

Three independent auditors with isolated contexts run simultaneously. Each re-reads primary legal sources independently. No shared context with the writer or each other.

Assembly

Converts audited content docs into PHP seeder files conforming to existing Laravel seeder patterns.

Verification and Commit

PHP lint, TypeScript checks, build verification, Git commit, push, and deployment instructions.
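The blocking rule in the Claim Verification stage can be sketched as a small gate. This is an illustrative Python sketch rather than the pipeline's actual code: the four ratings come from the stage description above, while the function names and the example claims are hypothetical. Note that UNVERIFIABLE does not block here, because the text names only fabricated and wrong claims as blocking.

```python
from enum import Enum


class Rating(Enum):
    VERIFIED = "VERIFIED"
    FABRICATED = "FABRICATED"
    WRONG = "WRONG"
    UNVERIFIABLE = "UNVERIFIABLE"


# Only these two ratings stop the pipeline before the audit stage.
BLOCKING = {Rating.FABRICATED, Rating.WRONG}


def blocking_claims(ratings: dict[str, Rating]) -> list[str]:
    """Return the claims that must be corrected before the audit stage."""
    return sorted(claim for claim, rating in ratings.items() if rating in BLOCKING)


def can_proceed(ratings: dict[str, Rating]) -> bool:
    """The pipeline may advance only when no blocking claim remains."""
    return not blocking_claims(ratings)


# Illustrative claims only, not real findings.
ratings = {
    "statute: Section 10 penalty": Rating.VERIFIED,
    "bill: HB 0000": Rating.FABRICATED,
    "fee: licence renewal": Rating.UNVERIFIABLE,
}
```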

05. The Verification Architecture

The obvious approach to verifying AI-generated content is to have another AI check it. Most teams building AI content systems do exactly this: the writer generates content, a reviewer reads the output and confirms it looks reasonable. The problem is that this does not actually verify anything. It automates agreement.

When a verifier reads the writer's output in the same context, it is already primed by the writer's framing. If the writer says "the penalty for unlicensed carry is a Class A misdemeanor punishable by up to one year imprisonment," the verifier reads that claim, finds it plausible, and confirms it. What the verifier does not do is open the actual statute and read the penalty section independently. It trusts the writer's paraphrase.

This is the fundamental design problem the platform solves through three architectural decisions.

05.01. Context isolation

The auditing agents do not inherit the writing agents' output as context. They receive the content to audit and the tools to verify it, but they do not see the writer's reasoning, the research brief the writer used, or the intermediate decisions the writer made. They operate with a fresh context window. This means the legal auditor cannot be influenced by the writer's framing. It must form its own understanding of what the statute says.
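One way to picture context isolation is as a deliberately narrow hand-off type. In this hedged Python sketch the class and field names are hypothetical; only the tool names WebSearch and WebFetch appear elsewhere in this write-up. The auditor's request type simply has no field in which the writer's brief or reasoning could travel.

```python
from dataclasses import dataclass, field


@dataclass
class WriterOutput:
    article_html: str
    research_brief: str  # writer-only context
    reasoning_notes: list[str] = field(default_factory=list)  # writer-only context


@dataclass(frozen=True)
class AuditRequest:
    article_html: str
    allowed_tools: tuple[str, ...]


def build_audit_request(output: WriterOutput) -> AuditRequest:
    """Pass on only the content under audit plus tool access, nothing else."""
    return AuditRequest(
        article_html=output.article_html,
        allowed_tools=("WebSearch", "WebFetch"),
    )


draft = WriterOutput(
    article_html="<p>Draft article body</p>",
    research_brief="notes the writer worked from",
    reasoning_notes=["why the writer chose this framing"],
)
request = build_audit_request(draft)
```

Making the omission structural, rather than an instruction to ignore extra context, means a later change cannot leak the writer's framing by accident.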

05.02. Primary source enforcement

Every agent in the chain is instructed to read the actual legal text, not news articles, not secondary summaries, not the previous agent's paraphrase. The legal scanner discovers changes via news, then verifies by reading the statute. The writer reads the statute to write the article. The legal auditor reads the statute again independently to verify the article. Three separate reads of the same primary source by three agents that do not share context. If a news article oversimplified the law and the writer inherited that simplification, the auditor catches it because the auditor is reading the statute, not the news article.

05.03. Multi-dimensional parallel auditing

Legal accuracy, citation integrity, and SEO compliance are three different quality dimensions. A single agent checking all three would experience attention degradation across dimensions. The platform runs three separate auditors simultaneously, each focused on one dimension, each with its own prompt, tools, and output format.

During one state's maintenance run, this verification layer caught a wrong live-fire scoring requirement (the writer said "7 out of 10 per distance" when the statute specifies "21 out of 30 total"), an incorrect oral argument date ("March 10-11" when it was "March 10"), and overlong titles on two new articles. All four issues were auto-corrected before the migrations reached the live database.
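The fan-out to three single-purpose auditors can be sketched with a thread pool. This Python sketch is an assumption-laden illustration: the auditor functions are trivial stand-ins for real agents, the 60-character title limit is hypothetical, and the real system dispatches AI agents rather than local functions.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Finding = str
Auditor = Callable[[dict], list[Finding]]


def legal_auditor(article: dict) -> list[Finding]:
    # Stand-in: a real legal auditor would re-read the statute itself.
    return []


def citation_auditor(article: dict) -> list[Finding]:
    return ["no source URLs"] if not article["sources"] else []


def seo_auditor(article: dict) -> list[Finding]:
    # Hypothetical 60-character limit; the real threshold is not stated.
    return ["title too long"] if len(article["title"]) > 60 else []


AUDITORS: dict[str, Auditor] = {
    "legal": legal_auditor,
    "citation": citation_auditor,
    "seo": seo_auditor,
}


def run_triple_audit(article: dict) -> dict[str, list[Finding]]:
    """Run each auditor concurrently on its own shallow copy of the article."""
    with ThreadPoolExecutor(max_workers=len(AUDITORS)) as pool:
        futures = {name: pool.submit(fn, dict(article)) for name, fn in AUDITORS.items()}
        return {name: future.result() for name, future in futures.items()}


report = run_triple_audit({"title": "T" * 75, "sources": []})
```

Each dimension reports separately, so a clean legal result cannot mask a citation or SEO finding.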

05.04. Deterministic primary-source grounding

Primary source enforcement only works if reading the primary source is reliable. Early on, every verification run re-fetched statute text live from six different state-legislature sites. That was slow, token-heavy, and non-reproducible: rate limits, JavaScript shells, and bot blocks meant the same audit could read different text on different days, or fail to read it at all.

The fix was a local, git-tracked mirror of the cited statutes: 871 firearms-relevant sections across the six states (California 203, Illinois 187, Rhode Island 130, Connecticut 121, New York 114, Massachusetts 116), each stored as a Markdown file with deterministic frontmatter and queried through a state-lookup CLI. Statutes change rarely, so mirroring the cited ones gives a stable, reproducible base; the audit now hits the network only for things that genuinely move: court dockets, pending bills, and news. The mirror is byte-identical across machines: a re-parse on the server reproduces the committed file exactly.

The payoff showed up on the first full verification run grounded by the mirror. Across all 584 articles and roughly 5,200 claims, checked at 100% coverage with no sampling, the citation gate returned zero NOT_FOUND across all six states: every statute the content cites resolves to real primary text. The architecture that began by catching a fabricated executive order now provably produces zero fabricated statute citations.
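A citation gate over a local mirror reduces to a set lookup. The Python sketch below assumes a frontmatter layout with state and section keys, which is a guess at the format rather than the mirror's real schema, and it works on in-memory strings instead of the actual files or CLI. The section numbers are placeholders, not real statute references.

```python
def parse_frontmatter(markdown: str) -> dict[str, str]:
    """Read simple 'key: value' pairs between the leading '---' fences."""
    lines = markdown.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta


def build_index(files: list[str]) -> set[tuple[str, str]]:
    """Index mirrored sections by (state, section)."""
    index: set[tuple[str, str]] = set()
    for text in files:
        meta = parse_frontmatter(text)
        if "state" in meta and "section" in meta:
            index.add((meta["state"], meta["section"]))
    return index


def citation_gate(
    cited: list[tuple[str, str]], index: set[tuple[str, str]]
) -> list[tuple[str, str]]:
    """Return every cited section that is NOT_FOUND in the mirror."""
    return [citation for citation in cited if citation not in index]


# Placeholder section numbers, not real statute references.
mirror = [
    "---\nstate: MA\nsection: 100\n---\nSection text",
    "---\nstate: IL\nsection: 200\n---\nSection text",
]
index = build_index(mirror)
not_found = citation_gate([("MA", "100"), ("IL", "999")], index)
```

Because the lookup never touches the network, the same input yields the same result on every run, which is the reproducibility property described above.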

06. The Maintenance Pipeline

Building content is half the problem. Laws change. Links break. Content goes stale. A legal reference site that is accurate on launch day and wrong six months later is worse than no site at all, because users trust it based on its initial accuracy.

The maintenance pipeline (/gl-update) uses 10 specialized agents across 7 stages. It audits an existing state's live content and produces Laravel database migrations to update the live database.

The key architectural decision is that the maintenance pipeline produces migrations, not seeders. Seeders create records from scratch and are used for new databases. Migrations modify existing records and are used for live databases. This distinction matters because migrations preserve article IDs, revision history, and foreign key relationships. They track which changes have been applied so the same fix is never run twice. And every article update creates a revision record in article_revisions, preserving the full history of changes.
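The three properties named above (records keep their IDs, each fix is applied once, and every update leaves a revision) can be demonstrated with an in-memory SQLite database. This Python sketch is a simplified stand-in for Laravel's migration system: only the article_revisions table name comes from the text, and the column layout, migration name, and article ID are hypothetical.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE articles (id INTEGER PRIMARY KEY, body TEXT);
    CREATE TABLE article_revisions (article_id INTEGER, old_body TEXT);
    CREATE TABLE migrations (name TEXT PRIMARY KEY);
    INSERT INTO articles (id, body) VALUES (42, 'old wording');
""")


def apply_migration(name: str, article_id: int, new_body: str) -> bool:
    """Update an existing article once, saving the prior text as a revision."""
    if db.execute("SELECT 1 FROM migrations WHERE name = ?", (name,)).fetchone():
        return False  # already applied, so the fix is never run twice
    (old_body,) = db.execute(
        "SELECT body FROM articles WHERE id = ?", (article_id,)
    ).fetchone()
    with db:  # one transaction: revision, update, and bookkeeping together
        db.execute("INSERT INTO article_revisions VALUES (?, ?)", (article_id, old_body))
        db.execute("UPDATE articles SET body = ? WHERE id = ?", (new_body, article_id))
        db.execute("INSERT INTO migrations VALUES (?)", (name,))
    return True


first = apply_migration("fix_threshold_wording", 42, "new wording")
second = apply_migration("fix_threshold_wording", 42, "new wording")
```

A seeder would have deleted and recreated the row; here the row with ID 42 survives, so anything that references it keeps working.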

Scan, Parallel

Four specialized scanners run simultaneously: legal scanner searches for new legislation and court decisions; link scanner checks every source URL; freshness scanner reads all content docs for staleness signals; SEO scanner checks for title and summary drift.

Triage · Human checkpoint

Maintenance planner reads all scan outputs and produces a prioritized plan. Each item is self-contained and can be approved or rejected independently. Every item is reviewed before processing begins.

Write

Maintenance writer produces the content updates in small batches, working from the approved plan or, with the --verify flag, from gl-verify's fix recommendations. Each change is a targeted, diffable edit, not a freehand rewrite.

Triple Audit, Parallel

Three independent auditors with isolated contexts run simultaneously, each re-reading the primary legal source. This is the stage that catches errors in the recommendations themselves, including mistakes made by the verification system that produced them.

Assemble

Migration assembler converts the audited content into Laravel migrations rather than seeders, preserving article IDs, revision history, and foreign keys. PHP lint and schema validation run before anything is committed.

Verify and Deploy

PHP lint, the deterministic citation gate, Git commit and push, deployment to the live database, then a re-run of the citation resolver on the deployed site. A fabrication flag fails and rolls back the deploy. Every migration commit carries a config('state.code') guard.

Cross-state verification

After deployment, the orchestrator SSHes into all six production servers and verifies that no articles leaked across state boundaries. Added after the contamination incident.

The pipeline also updates the seeders and content docs to stay in sync with the database, so a fresh install from seed produces the same result as a migrated database.

07. The Deep Audit

The build pipeline and maintenance pipeline verify content at generation time. But the build pipeline's early versions were less rigorous, and four states' worth of content had been generated before the verification architecture reached its current level. I needed to answer a specific question: how accurate is the content that is already live?

I built /gl-verify, a dedicated verification skill with 6 specialized agents that performs claim-by-claim verification of every factual statement across an entire state's content.

The process runs in stages. First, claim extractors parse every article and extract individual verifiable claims: statute citations, penalty amounts, fee figures, case names, effective dates, agency roles, and procedural facts. Each claim is categorized by type and assigned a verification priority. Statute citations, fees, and penalties are Priority 1 and are all verified. Case law and legal status claims are Priority 2. Agency roles and procedural facts are Priority 3 and are sampled.

Then three parallel verifiers (statute-verifier, case-verifier, fact-verifier) independently check claims against primary sources. A consistency checker cross-references claims across articles to find contradictions where different articles cite the same statute but state different facts.
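The consistency check amounts to grouping claims by what they are about and flagging any group with more than one distinct value. A minimal Python sketch follows, assuming each extracted claim carries statute, attribute, value, and article fields; those field names and the sample data are hypothetical.

```python
from collections import defaultdict


def find_contradictions(claims: list[dict]) -> dict[tuple[str, str], dict[str, list[str]]]:
    """Group claims by (statute, attribute) and keep groups with differing values."""
    seen: dict[tuple[str, str], dict[str, list[str]]] = defaultdict(dict)
    for claim in claims:
        key = (claim["statute"], claim["attribute"])
        seen[key].setdefault(claim["value"], []).append(claim["article"])
    return {key: values for key, values in seen.items() if len(values) > 1}


# Illustrative data only: two articles disagree about the same statute.
claims = [
    {"statute": "Section A", "attribute": "age threshold", "value": "under 18", "article": "storage-guide"},
    {"statute": "Section A", "attribute": "age threshold", "value": "under 16", "article": "storage-faq"},
    {"statute": "Section B", "attribute": "fee", "value": "$100", "article": "licensing"},
]
contradictions = find_contradictions(claims)
```

The check does not decide which article is right. It only surfaces the disagreement so a verifier can settle it against the primary source.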

On March 15, 2026, the deep audit ran its first full cycle across all six states. It found 18 CRITICAL errors across 446 articles, the kind of errors that could have caused someone to misunderstand their legal obligations.

The errors were concrete. In one state, a chain of articles had statute sections assigned to entirely wrong offenses, so someone researching one regulation would have landed on an unrelated one. A fabricated bill number was cited as the source for a roster change when the real legislation was a different bill from a different year. A safe-storage age threshold was listed as "under 16" when the statute specifies "under 18," which would have told an owner they had no obligation for 16- and 17-year-olds. A felony offense was listed one class too low, understating the sentencing range.

All 18 CRITICAL errors and all HIGH-severity errors were fixed via /gl-update the same day. The deep audit produced fix recommendations formatted to feed directly into the maintenance pipeline. 80 total fixes were applied across all six states, touching 76 articles.

The most important finding was the self-correction pattern. In 3 of 6 states, the maintenance pipeline's Stage 4 audit caught errors in the deep audit's own recommendations. In one state, the deep audit recommended changing a section count from "159" to "166." The Stage 4 legal auditor verified that the original "159" was correct and reverted the change. In another, the deep audit recommended changing a persistent felony offender maximum from "life" to "25 years." The Stage 4 auditor verified that the maximum is life imprisonment and rejected the item. In a third, the deep audit recommended changing a penalty cross-reference. The Stage 4 auditor verified that the original citation was more accurate and blocked the change.

No single AI invocation in this system is trusted as the final word. The adversarial structure, where separate agents with separate contexts check each other's work, prevents error propagation even when the error originates from the verification system itself.

Three days later, after 79 new legislative session articles and 10 new guide articles were deployed, the deep audit ran a second full cycle across the entire corpus. CRITICAL errors fell 61%, from 18 to 7, and total findings dropped as well, even though the corpus had grown. The state that had been the worst in the first cycle showed the largest gain once its CRITICAL errors were resolved. All findings were fixed the same day.

The deep audit is now a repeating process, not a one-time event. Each cycle finds fewer errors than the last, and each cycle's corrections are encoded back into the pipeline. The system improves with every pass.

08. The Contamination Incident

March 14, 2026 · Silent failure detected

5 state-specific articles leaked onto all 6 production sites. Migrations had no config('state.code') guards, so when they ran on each site's database, the articles appeared everywhere. A Massachusetts visitor could have read Illinois penalty ranges and believed they applied in Massachusetts.

The failure was silent. No errors, no failed migrations, no broken pages. The articles rendered correctly. They were just on the wrong sites. It was caught manually.

Response: built /gl-check, which SSHes into each production server and runs four database integrity checks: cross-state article contamination, orphaned records, article count sanity checking, and migration sync verification across all six sites. A full audit completes in under a minute. All future migrations that insert or modify state-specific articles must include config('state.code') guards, enforced during Stage 6 verification before any commit.
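Both halves of the response, the guard and the detection, can be sketched in a few lines. This Python sketch is illustrative only: the real guard is a config('state.code') check inside a PHP migration, and the text does not say how /gl-check attributes an article to a state, so the per-state slug manifests used here are an assumption, as are the slugs.

```python
def should_run(migration_state: str, deployed_state: str) -> bool:
    """Guard: a state-specific migration is a no-op on every other site."""
    return migration_state.upper() == deployed_state.upper()


def find_contamination(
    site_state: str, site_slugs: set[str], manifests: dict[str, set[str]]
) -> dict[str, list[str]]:
    """Report slugs on this site that belong to another state's manifest."""
    leaked: dict[str, list[str]] = {}
    for state, slugs in manifests.items():
        if state == site_state:
            continue
        overlap = sorted(site_slugs & slugs)
        if overlap:
            leaked[state] = overlap
    return leaked


# Hypothetical manifests: which article slugs each state is expected to own.
manifests = {"MA": {"ma-ltc-renewal"}, "IL": {"il-foid-penalties"}}
ma_site = {"ma-ltc-renewal", "il-foid-penalties"}  # one Illinois article leaked
report = find_contamination("MA", ma_site, manifests)
```

The guard prevents the leak; the check detects one if a guard is ever missing. Keeping both means a single omission does not fail silently.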

The incident was a failure. The response was to encode the detection into the architecture so it cannot recur silently. That pattern (encounter a failure, encode the prevention into the system rather than relying on manual vigilance) recurs throughout the platform's development.

09. The Evolution

When I first built the build pipeline, a state's content could be generated in about 10 minutes. The system prompted, generated, and returned output with minimal verification. The content looked good. It was plausible, well structured, and read like competent legal reference material.

The problem was that "plausible" and "correct" are not the same thing. The 10-minute run meant the system was not actually verifying anything. It was generating content and publishing it, which is exactly the pattern that fails in high-stakes domains.

- 10m: No verification. Plausible output, published immediately.

- 8h: Full verification added. System became rigorous.

- 4h: Optimized routing. Rigorous and efficient.

The system then grew to approximately 8 hours per state as verification layers were added. That time was consumed by real work: the legal scanner running WebSearch and WebFetch across every topic area, the link scanner hitting every URL with rate limiting, the legal auditor independently fetching and reading every statute cited in every content update, the citation auditor doing the same for every URL, the migration assembler reading reference files and validating schema.

After analyzing where tokens were being spent across multiple full pipeline runs, I designed an optimized routing system that distinguishes between tasks that need full verification and tasks that do not. The current version runs in approximately 4 hours per state for a full pipeline, and routine maintenance cycles are significantly faster.

A system that runs fast and produces output is easy. A system that slows itself down to verify and then speeds itself back up by eliminating waste is the harder design problem.

10. Cost Optimization

A full maintenance pipeline run is token-intensive per state, and across six states the monthly cost adds up. It is sustainable but not optimal, because a significant portion of those tokens is spent re-discovering issues that were already documented in previous runs.

The insight that drove the optimization: P3 and P4 maintenance items (stale temporal references, missing metadata, SEO improvements, tag cleanup) fall into two categories that need fundamentally different handling.

Metadata changes (tags, who_affected, action_needed, seo_description) are database field updates. They cannot introduce legal inaccuracies. Running a 10-agent pipeline to produce UPDATE articles SET who_affected = 'All LTC holders' is waste.

Content changes (thin content expansion, temporal language fixes, citation formatting) touch article body text and could introduce errors. They need at least one audit pass, but not three.
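The two-category split can be expressed as a routing function. In this Python sketch the four metadata field names come from the text, while the route labels, the item shape, and the rule that P1 and P2 items always take the full pipeline are assumptions made for illustration.

```python
# Fields the text identifies as metadata-only database updates.
METADATA_FIELDS = {"tags", "who_affected", "action_needed", "seo_description"}


def route(item: dict) -> str:
    """Pick the cheapest path that still covers the item's risk."""
    if item["priority"] in ("P1", "P2"):
        return "full_pipeline"  # assumed: high-priority items are never shortcut
    if set(item["fields"]) <= METADATA_FIELDS:
        return "direct_field_update"  # cannot touch legal wording
    return "single_audit_pass"  # body text changed, so one audit is required


examples = [
    {"priority": "P3", "fields": ["tags", "who_affected"]},
    {"priority": "P4", "fields": ["body"]},
    {"priority": "P3", "fields": ["tags", "body"]},
    {"priority": "P1", "fields": ["body"]},
]
routes = [route(item) for item in examples]
```

An item that mixes metadata and body changes falls through to the audited path, so the shortcut applies only when every touched field is metadata.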

- 100%: token budget, full pipeline

- 20%: token budget, optimized maintenance (80% reduction)

The result: routine maintenance dropped by approximately 80% per state. The verification and safety checks that matter (legal accuracy on content changes, PHP lint, cross-state contamination detection, schema validation) are preserved. The checks that do not matter for the task at hand are skipped.

The quality of the output is identical. The same migrations are produced, the same content is written, the same safety checks run. The only difference is that the system stops paying to re-discover issues it already documented.

11. Outcome

The platform serves 584 articles across 6 states from a single monorepo:

| State | Domain | Articles | Method |
|---|---|---|---|
| Massachusetts | massgunlaws.com | 110 | Manual (24 sessions), then /gl-update + /gl-verify (repeated) |
| Rhode Island | rigunlaws.com | 79 | Manual (24 sessions), then /gl-update + /gl-verify (repeated) |
| California | caligunlaws.com | 126 | /build-state, then /gl-update + /gl-verify (repeated) |
| Connecticut | connecticutgunlaws.com | 70 | /build-state, then /gl-update + /gl-verify (repeated) |
| New York | nygunlaws.com | 101 | /build-state, then /gl-update + /gl-verify (repeated) |
| Illinois | ilgunlaws.com | 98 | /build-state, then /gl-update + /gl-verify (repeated) |

Massachusetts was the first site, built manually over 24 sessions. That manual process informed the design of the build pipeline. Rhode Island was the second manual build. California, Connecticut, New York, and Illinois were built by the automated pipeline.

Across the first two deep audit cycles, the system extracted 5,617+ verifiable claims, verified 2,064+ against primary legal sources, found 25 CRITICAL errors (18 in the first, 7 in the second), and applied 126 total fixes across all six states. CRITICAL errors dropped 61% between runs, and the audit has run on a recurring basis since. The Stage 4 audit caught errors in the deep audit's own recommendations, including cases where the deep audit was wrong and the original content was correct.

The most recent full run is the first grounded by the statute mirror. It verified every claim across all six states at 100% coverage with no sampling, roughly 5,200 claims across 584 articles, and returned zero fabricated statute citations: every citation resolves to real primary text. The findings it surfaced were precision errors in penalty and threshold wording, queued for same-session correction, which is exactly what the system exists to catch.

The system operates on seven skills:

| Skill | Purpose | Scope |
|---|---|---|
| /build-state | Generate complete content for a new state | 9 agents |
| /gl-scan | Scan for legal changes across 7 vectors: bills, court rulings, regulations, effective dates, federal impacts, ballot measures, executive actions | 7 vectors |
| /gl-update | Audit and update live content via database migrations | 10 agents |
| /gl-verify | Claim-by-claim verification against primary legal sources | 6 agents |
| /gl-check | Cross-state data integrity verification | orchestrator |
| /gl-status | Read-only pipeline status dashboard | read-only |
| /gl-index | Indexing diagnostic: GSC coverage, orphan detection, internal-link suggestions | 3 agents |

Legislative monitoring runs through the /gl-scan skill, which queries the LegiScan API for active firearms-related bills in each state's legislative session, cross-references existing coverage, and generates articles for any gaps. It is one of seven scan vectors that also cover court rulings, regulatory changes, effective dates, federal impacts, ballot measures, and executive actions. The first scan across five states produced 79 new session bill articles. Content QA caught 7 CRITICAL hallucination errors in the generated articles before they reached production. The same verification architecture that protects the original build pipeline protects the legislative scanner.
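The cross-referencing step is a set difference between active bills and bills the site already covers. The Python sketch below deliberately leaves out the LegiScan request itself and works on an already-fetched list; the number and title field names, the normalization rule, and the bill numbers are hypothetical placeholders.

```python
def normalize(number: str) -> str:
    """Collapse spacing, dots, and case so 'H.B. 12' matches 'HB12'."""
    return number.replace(" ", "").replace(".", "").upper()


def uncovered_bills(active_bills: list[dict], covered_numbers: list[str]) -> list[dict]:
    """Return active bills with no existing article, in their original order."""
    covered = {normalize(number) for number in covered_numbers}
    return [bill for bill in active_bills if normalize(bill["number"]) not in covered]


# Placeholder bills, not real legislation.
active = [
    {"number": "HB 0001", "title": "Placeholder bill one"},
    {"number": "S.B. 0002", "title": "Placeholder bill two"},
    {"number": "HB 0003", "title": "Placeholder bill three"},
]
gaps = uncovered_bills(active, covered_numbers=["hb0001", "SB 0002"])
```

Each gap would then go through the same writing and verification stages as any other article, which is where the hallucination errors mentioned above were caught.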

The platform architecture is domain-agnostic. The same pattern of research, plan, write, verify, audit, deploy, maintain, monitor, and deep-verify could apply to any domain where accuracy matters, content must cite primary sources, and the underlying rules change over time. The agents would need different prompts and different source hierarchies, but the pipeline structure, the triple-audit pattern, the claim verification layer, the content doc intermediate format, the migration system, the deep audit verification, the cost optimization routing, and the post-deployment integrity verification would remain identical.